Speakers

Leonard Axelsson

- Developer/Operations at Mojang

@xlson

Ville Svärd

- Consultant, programmer, architect, tester, ...

@villesv

Behaviour

Language

Perception

At the core (of agility), different kinds

Three chapters

- A story

- A tool

- Observations and benefits

A story of monitoring

Is your team performing?

getting metrics out of your team?

How about your product?

in fact, how well do you know your product?

How will you know?

feedback from users, ops, testing?

Once upon a time at

consultants, working as developer and tester

We had a problem

We wanted to settle concerns about stability and performance for a new application about to be integrated in a legacy environment

Blunt tools

Subject kept popping up, much labor to settle it (to our satisfaction) every time

From the heart of the application

- Lack "depth"

- Lack reliability/repeatability

- Involve manual labor

- Provide slow feedback

MBeans/JConsole => No mining, unreliable

Cron/CSV/Excel => Hard to repeat, much work, error prone

A hero emerges

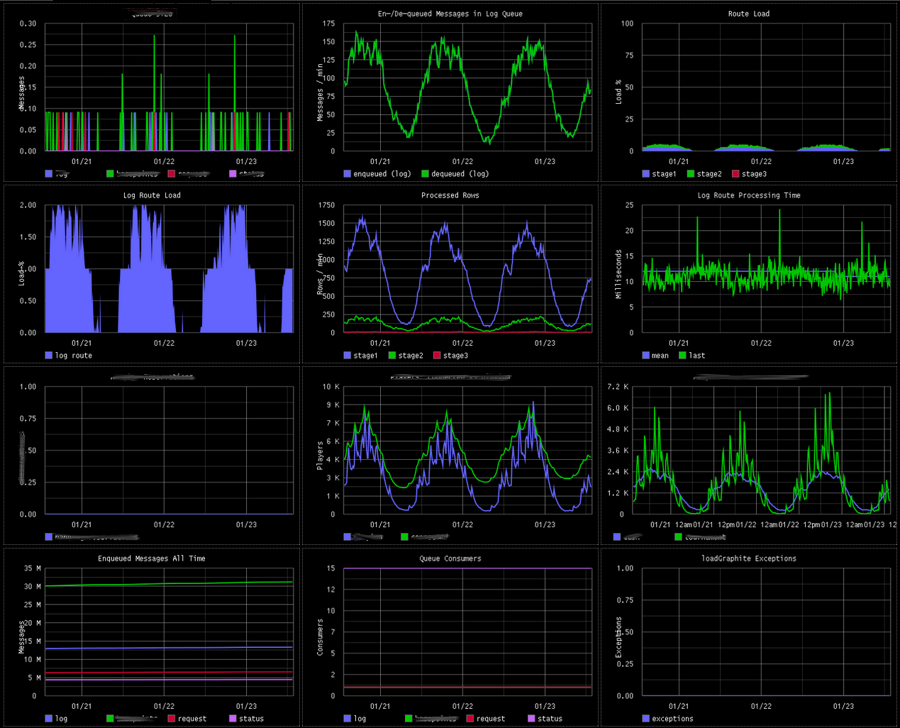

Graphite

- Set up

- Collect

- Presto!

Half a day trying it out, three simple/relevant metrics at the end of the day, data available => pursue further!

Water cooler effect

an immediate side effect

Out of the darkness

shared understanding and language?

And so it went on

used in testing, verified improvements, helped proof the prod env.

Lone heroes are a myth

- Tools

- Environment

- Team

Monitoring project requested by PO/Ops, access to load testing for a long time, curious team

A tool

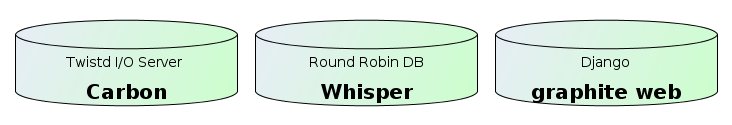

Graphite

Easy as setting up a Python webapp, all open source, made to scale

"getting the data in"

Graphite does not do it for you, but it is really easy

"Hello metric"

(Python)

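    import socket
    import time

    def collect_metric(name, value, timestamp):
        # Send one "name value timestamp" line to Carbon's plaintext port
        sock = socket.socket()
        sock.connect(("localhost", 2003))
        # Sockets take bytes on Python 3, so encode the line first
        sock.sendall(("%s %d %d\n" % (name, value, timestamp)).encode("ascii"))
        sock.close()

    def now():
        # Seconds since the epoch, as an integer
        return int(time.time())

    collect_metric("meaning.of.life", 42, now())

"Hello metric"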

(Clojure)

    (import [java.net Socket]
            [java.io PrintWriter])

    (defn write-metric [name value timestamp]
      ;; Send one "name value timestamp" line to Carbon's plaintext port
      (with-open [socket (Socket. "localhost" 2003)
                  os (.getOutputStream socket)]
        (binding [*out* (PrintWriter. os)]
          (println name value timestamp))))

    (defn now []
      ;; Seconds since the epoch, as an integer
      (int (/ (System/currentTimeMillis) 1000)))

    (write-metric "meaning.of.life" 42 (now))

[ demo time ]

Apply a function, nonNegativeDerivative, talk about composability, how different data can be combined easily
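To make the demo's nonNegativeDerivative a bit more concrete, here is a rough plain-Python sketch of the idea: turning an ever-growing counter into per-interval changes. It is an approximation for illustration, not Graphite's own code. The function name `non_negative_derivative` and the sample values are made up, and Graphite's optional `maxValue` wrap handling is left out.

```python
def non_negative_derivative(values):
    """Turn an ever-growing counter into per-interval deltas.

    The first point has no predecessor, and a drop (for example a
    counter reset after a restart) yields None instead of a negative delta.
    """
    deltas = []
    previous = None
    for value in values:
        if value is None or previous is None:
            # No usable pair of points, so no delta can be computed
            deltas.append(None)
        else:
            delta = value - previous
            deltas.append(delta if delta >= 0 else None)
        previous = value
    return deltas


# Hypothetical request counter sampled once per interval; it resets to 3.
counter = [100, 130, 130, 175, 3, 20]
print(non_negative_derivative(counter))  # [None, 30, 0, 45, None, 17]
```

The reset shows up as a gap rather than a huge negative spike, which keeps the graph readable.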

"making sense"

Instant availability

Watching changes take effect immediately

Sharing is caring...

Availability over http => huge benefit
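Since every graph is just a URL, sharing one can be as simple as pasting a link. The sketch below builds such a link using the `target`, `from` and `format` parameters of Graphite's render endpoint. The host `graphite.example.com` and the helper name `render_url` are placeholders, not something from the talk.

```python
from urllib.parse import urlencode


def render_url(base_url, targets, time_from="-1h", fmt=None):
    """Build a Graphite render URL for one or more targets."""
    params = [("target", t) for t in targets]
    params.append(("from", time_from))
    if fmt is not None:
        # For example "json" or "csv" instead of the default image
        params.append(("format", fmt))
    return base_url.rstrip("/") + "/render?" + urlencode(params)


# Hypothetical host; the metric name is the one from "Hello metric".
url = render_url("http://graphite.example.com", ["meaning.of.life"], "-2h")
print(url)  # http://graphite.example.com/render?target=meaning.of.life&from=-2h
```

Passing `fmt="json"` gives the same data in a form that scripts and tests can consume.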

Data retention

Historic data for comparison and regression
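One way to put retained data to use is to overlay the current series with the same series shifted back in time, using Graphite's `timeShift` function. The sketch below only composes the target expressions as strings. The helper `wrap_target` is hypothetical, and the seven-day shift is an arbitrary choice.

```python
def wrap_target(function, target, *args):
    """Wrap a target expression in a Graphite function call.

    Extra arguments are passed as quoted strings, e.g. "7d".
    """
    rendered = [target] + ['"%s"' % a for a in args]
    return "%s(%s)" % (function, ",".join(rendered))


current = wrap_target("nonNegativeDerivative", "meaning.of.life")
last_week = wrap_target("timeShift", current, "7d")
print(current)    # nonNegativeDerivative(meaning.of.life)
print(last_week)  # timeShift(nonNegativeDerivative(meaning.of.life),"7d")
```

Requesting both expressions as targets in one graph puts this week next to last week.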

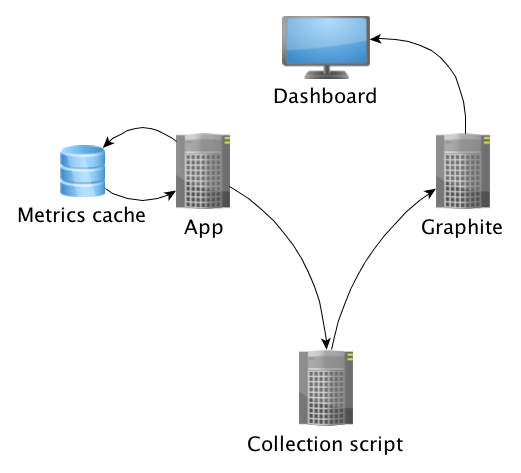

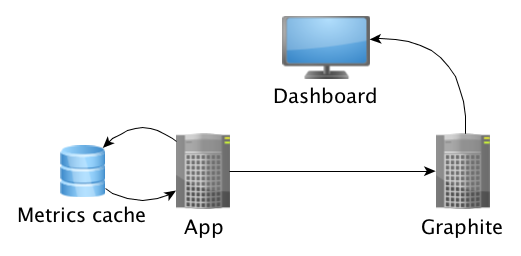

Pulling metrics

Pushing metrics

Observations

Thirst for knowledge

need for metrics, notice missing info, eagerness to retest/deploy, experimentation

Familiarity with behaviour

easily spot trends/errors, don't panic, spotted prod. HW problems

Increased confidence

outspoken within the team, outside (graph as fact), history as reference

Influence on design

at least one feature not impl., metrics show when/where improvement needed (or not)

A tool for testing

data as reference when "composing" is easy!, regressions using historic data
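A regression check against historic data can be very small. The sketch below compares the mean of a current test run with a baseline run and flags it if it is more than a tolerance worse. The numbers, the 10% tolerance and the function names are all invented for illustration. In practice the two lists would come from Graphite, for example as JSON.

```python
def mean(values):
    """Average of the non-missing points (gaps are represented as None)."""
    present = [v for v in values if v is not None]
    if not present:
        raise ValueError("series has no data points")
    return sum(present) / len(present)


def has_regressed(baseline, current, tolerance=0.10):
    """True if the current mean exceeds the baseline mean by more than tolerance."""
    return mean(current) > mean(baseline) * (1 + tolerance)


# Hypothetical response times in ms: last week's run vs. today's run.
last_week = [120, 118, None, 125, 121]
today = [150, 148, 155, None, 152]
print(has_regressed(last_week, today))  # True
```

This treats higher values as worse, which fits response times. For throughput-style metrics the comparison would be flipped.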

Starting early

start in development, silos, ready for prod.

Collaborative benefits

Nurturing conversation with data

The water cooler effect

- Graphs attract audience

- Natural talking matter

The team

- Curing blindness

- Making better decisions

(with data as guide)

Stakeholders

- Sparking curiosity

- Shared understanding

"Oh, what is that?", "Quite ready yet?", shared decision making, what influences performance, associating names with data

Managers

- Information as leverage

changing procedures, investment in infrastructure

Behaviour

Language

Perception

We started with a monitoring tool, we got so much more

Get to know your application

today!

Ville Svärd

ville.svard@agical.com | @villesv

Leonard Axelsson

leo@xlson.com | @xlson

Attributions

Images

Big Brother, Noc, Concerns, Blunt tools, Gather round, Thirst, Confidence, Behaviour, Design, Testing, Start monitoring, Demo Time

Thanks

The team at Entraction (IGT)

Mårten Gustafson